Accelerating Deep Learning on Edge Devices: Hardware-Software Co-Design for Efficient Neural Networks

Keywords:

Deep Learning, Edge Computing, Hardware-Software Co-Design, Neural Network Acceleration, Energy Efficiency, Model Optimization, Edge AIAbstract

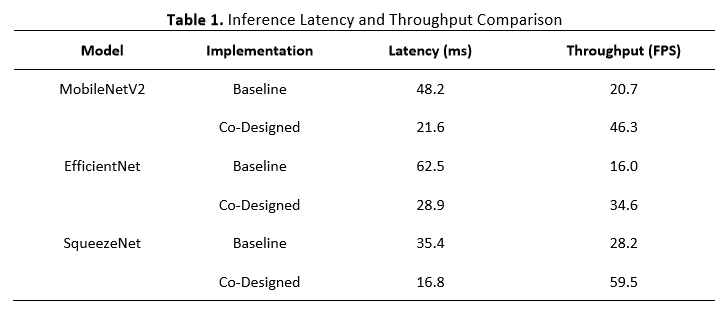

The rapid growth of deep learning applications on edge devices has created significant challenges related to computational complexity, energy consumption, and latency. Unlike cloud-based systems, edge devices operate under strict resource constraints, including limited processing power, memory capacity, and energy availability. These limitations necessitate efficient strategies to enable real-time and reliable deep learning inference at the edge. As a result, hardware-software co-design has emerged as a promising approach to optimize neural network performance while maintaining energy efficiency and deployment feasibility. This paper investigates a hardware-software co-design framework for accelerating deep learning models on edge devices. The proposed approach jointly optimizes neural network architectures and underlying hardware platforms by integrating model compression techniques, such as quantization and pruning, with hardware-aware design strategies. Custom accelerators, parallel processing architectures, and memory-efficient dataflows are considered to minimize latency and power consumption while preserving inference accuracy. Experimental evaluations demonstrate that the co-designed system achieves significant improvements in inference speed and energy efficiency compared to conventional hardware-agnostic implementations. The results indicate reduced computational overhead and memory access costs, making the proposed framework suitable for real-time edge intelligence applications such as Internet of Things (IoT), autonomous systems, and mobile computing. Overall, this study highlights the importance of collaborative hardware-software optimization in addressing the performance and efficiency challenges of deploying deep learning models on edge devices. The findings provide practical insights for designing scalable and energy-efficient edge AI systems and contribute to the advancement of next-generation intelligent edge computing

Downloads

References

M. Shuvo, S. Islam, J. Cheng, and B. Morshed, “Efficient Acceleration of Deep Learning Inference on Resource-Constrained Edge Devices: A Review,” Proc. IEEE, vol. 111, pp. 42–91, 2023, doi: 10.1109/jproc.2022.3226481.

J. Sander, A. Cohen, V. Dasari, B. Venable, and B. Jalaian, “On Accelerating Edge AI: Optimizing Resource-Constrained Environments,” ArXiv, vol. abs/2501.15014, 2025, doi: 10.48550/arxiv.2501.15014.

T. Tatarnikova and A. Raskopina, “Analyzing the effectiveness of post-learning quantization for optimizing neural networks,” H&ES Res., 2025, doi: 10.36724/2409-5419-2025-17-2-4-10.

D. Ngo, H.-C. Park, and B. Kang, “Edge Intelligence: A Review of Deep Neural Network Inference in Resource-Limited Environments,” Electronics, 2025, doi: 10.3390/electronics14122495.

A. Shawahna, S. Sait, and A. El-Maleh, “FPGA-Based Accelerators of Deep Learning Networks for Learning and Classification: A Review,” IEEE Access, vol. 7, pp. 7823–7859, 2019, doi: 10.1109/access.2018.2890150.

Chakraborty Anindita, Ghosh Shivnath, B. Kumar, Mandal Sampurna, Chakraborty Pranashi, and Paul Sreelekha, “‘Resource-Aware Deep Learning: Neural Network Optimization for Edge Devices: A Review,’” Int. J. Environ. Sci., 2025, doi: 10.64252/yc79fn98.

A. J. Tyagi, “Hardware/Software Co-design: Addressing Uncertainty in Platform Development through Workload Modeling and Bottleneck Feedback Loops,” Int. J. Recent Innov. Trends Comput. Commun., 2023, doi: 10.17762/ijritcc.v11i5.11705.

J. Haris, P. Gibson, J. Cano, N. Agostini, and D. Kaeli, “SECDA: Efficient Hardware/Software Co-Design of FPGA-based DNN Accelerators for Edge Inference,” 2021 IEEE 33rd Int. Symp. Comput. Archit. High Perform. Comput., pp. 33–43, 2021, doi: 10.1109/sbac-pad53543.2021.00015.

M. Capra, B. Bussolino, A. Marchisio, G. Masera, M. Martina, and M. Shafique, “Hardware and Software Optimizations for Accelerating Deep Neural Networks: Survey of Current Trends, Challenges, and the Road Ahead,” IEEE Access, vol. 8, pp. 225134–225180, 2020, doi: 10.1109/access.2020.3039858.

T. Leppänen, A. Lotvonen, and P. Jääskeläinen, “Cross-vendor programming abstraction for diverse heterogeneous platforms,” vol. 4, 2022, doi: 10.3389/fcomp.2022.945652.

B. Zoph, V. Vasudevan, J. Shlens, and Q. Le, “Learning Transferable Architectures for Scalable Image Recognition,” 2018 IEEE/CVF Conf. Comput. Vis. Pattern Recognit., pp. 8697–8710, 2017, doi: 10.1109/cvpr.2018.00907.

E. Husom et al., “Sustainable LLM Inference for Edge AI: Evaluating Quantized LLMs for Energy Efficiency, Output Accuracy, and Inference Latency,” ACM Trans. Internet Things, vol. 6, pp. 1–35, 2025, doi: 10.1145/3767742.

Y. Mao, X. Yu, K. Huang, Y.-J. A. Zhang, and J. Zhang, “Green Edge AI: A Contemporary Survey,” Proc. IEEE, vol. 112, pp. 880–911, 2023, doi: 10.1109/jproc.2024.3437365.

W. Jiang et al., “Device-Circuit-Architecture Co-Exploration for Computing-in-Memory Neural Accelerators,” IEEE Trans. Comput., vol. 70, pp. 595–605, 2019, doi: 10.1109/tc.2020.2991575.

R. Yu et al., “A full-stack memristor-based computation-in-memory system with software-hardware co-development,” Nat. Commun., vol. 16, 2025, doi: 10.1038/s41467-025-57183-0.